Apart from the naming and their location, there are several other differences between a Level 1 cache, Level 2 cache and Level 3 cache memory.

Knowing these differences will help you judge which of the three caches matters most, if any one of them does.

If you want to skip the research but still understand them, this article is for you. Read on for the major differences and the importance of each level.

KEY TAKEAWAYS

- The L1 cache memory is called the internal or primary cache and the L2 cache memory is called the secondary cache. The L3 cache memory is traditionally called the external cache, although on modern CPUs it sits on the processor itself and is shared by the cores.

- The L1 cache memory is the fastest and the L3 cache memory is the slowest but is faster than the main system memory.

- The capacity of the Level 1 cache memory is the lowest while that of the Level 3 cache memory is the largest.

- The access time of the Level 1 cache memory is lowest and that of the Level 3 cache memory is the highest.

L1 Cache vs L2 Cache vs L3 Cache – The 8 Differences

1. Type or Cache Nomenclature

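The Level 1 cache memory is often referred to as the internal or primary cache as it resides within the CPU core.

The Level 2 cache memory, on the other hand, is often referred to as the secondary cache. It was historically also called the external cache because it once sat outside the CPU, though it is now built into the processor.

And, the Level 3 cache memory is often simply referred to as the external cache, a name that dates from when it was located on the motherboard. On modern CPUs it is part of the processor and is also known as the last-level cache.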

2. Location

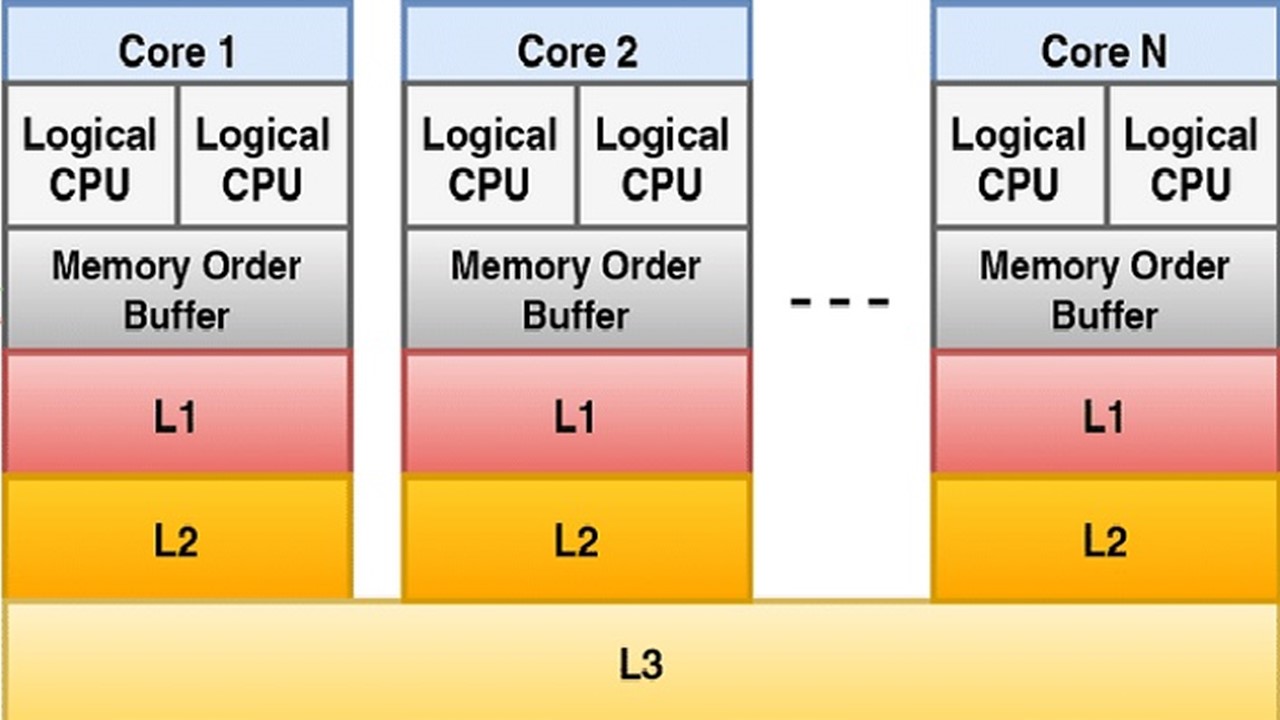

The Level 1 cache memory is located closest to the core, being actually within the core itself.

Therefore, the L1 cache memory is dedicated to each CPU core, which means that each core has its own L1 cache memory.

The Level 2 cache memory, on the other hand, is located outside the Level 1 cache memory but within the CPU chip. On most modern CPUs each core has its own L2 cache memory.

However, this is not a necessity: on some CPUs an L2 cache is shared by a group of cores.

And, as for the Level 3 cache memory, it is typically shared by all the cores of the processor. On modern CPUs it sits on the processor die or package, whereas on older systems it was a separate chip on the motherboard or processor module.

3. Speed

The Level 1 cache memory is the fastest among all these three cache memories, taking only a few cycles to fetch data (about 4 cycles on many modern x86 cores).

The Level 2 cache memory, on the other hand, is slower than the Level 1 cache memory taking about 15 cycles to fetch data.

However, it is faster than the Level 3 cache.

And, the Level 3 cache memory is the slowest of all these three types taking about 40 cycles to fetch data.

However, this is faster than the main memory of the system.

4. Capacity

The Level 1 cache memory is the smallest in size among all these three cache memories.

In most x86 CPUs, the L1 cache measures about 64 KB per core, counting its data and instruction parts together.

The Level 2 cache memory, on the other hand, is larger in size as compared to the Level 1 cache memory but is usually smaller in size in comparison to the Level 3 cache.

In many x86 CPUs, the L2 cache measures about 512 KB per core, although sizes from 256 KB to a few MB are also common.

And, the Level 3 cache memory is the largest among these three cache memories.

In most of the x86 CPUs, the L3 cache measures in tens of MBs.

5. Contents

The Level 1 cache memory holds the data and instructions that the CPU core is using most frequently to execute the requests made by the system.

The Level 2 cache memory, on the other hand, holds recently used data and instructions that do not fit in the Level 1 cache memory or have been pushed out of it.

And, the Level 3 cache memory holds the data that do not fit in the L1 and L2 cache memories.

Whether a level also keeps copies of what the levels above it hold depends on the design: inclusive caches do, while exclusive caches store only what the faster levels do not.

6. Design

The Level 1 cache is usually split into two separate parts namely the L1 Data Cache and the L1 Instruction Cache.

The data cache stores the data that the CPU core reads and writes, and the instruction cache stores the instructions that the CPU core is about to execute.

On the other hand, the Level 2 cache and the Level 3 cache are typically not divided into such parts.

7. Access Times

As far as the access time is concerned, it is around 1 nanosecond in the case of the Level 1 cache memory, since a handful of cycles at a clock speed of several GHz amounts to about that much.

On the other hand, the access time as for the Level 2 cache memory is usually 3 to 10 nanoseconds.

And, in the case of the Level 3 cache memory the access time is normally 10 to 20 nanoseconds.
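Cycle counts and nanoseconds are two views of the same latency, linked by the clock speed. The minimal sketch below converts one into the other; the function name `cycles_to_ns` and the 4.0 GHz clock are assumptions chosen for illustration, and the cycle counts are the rough L2 and L3 figures quoted in the Speed section.

```python
def cycles_to_ns(cycles: float, clock_ghz: float) -> float:
    """Convert a latency in CPU cycles to nanoseconds at a given clock speed."""
    if clock_ghz <= 0:
        raise ValueError("clock_ghz must be positive")
    # One cycle at f GHz lasts 1/f nanoseconds.
    return cycles / clock_ghz


# Assumed clock speed of 4.0 GHz; real CPUs vary and change frequency at run time.
for level, cycles in {"L2": 15, "L3": 40}.items():
    print(f"{level}: {cycles} cycles = {cycles_to_ns(cycles, 4.0):.2f} ns")
# L2: 15 cycles = 3.75 ns
# L3: 40 cycles = 10.00 ns
```

A slower clock stretches the same cycle count into more nanoseconds, which is one reason quoted access times appear as ranges.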

8. Accessing Sequence

The CPU accesses the Level 1 cache memory at first to look for the necessary data for its operations.

If the data is not available in the L1 cache memory, the CPU accesses the Level 2 cache memory next for it.

And, if the data is not available in the Level 2 cache either, the CPU will access the Level 3 cache memory as its last attempt before moving on to the main memory of the system.
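The lookup order described above can be sketched in a few lines. This is a simplified model, not how any particular CPU is built: each cache level is an ordinary dictionary, the function name `lookup` is hypothetical, and copying a found value into every faster level that missed is an assumed fill policy.

```python
def lookup(address, levels, main_memory):
    """Search each cache level in order; fall back to main memory if all miss.

    `levels` is a list of (name, dict) pairs ordered from fastest to slowest.
    Returns (value, name_of_the_place_that_served_the_request).
    """
    for index, (name, store) in enumerate(levels):
        if address in store:
            value, source = store[address], name
            break
    else:
        # No cache level held the address, so main memory serves the request.
        value, source = main_memory[address], "RAM"
        index = len(levels)
    # Assumed fill policy: copy the value into every level that missed.
    for _, store in levels[:index]:
        store[address] = value
    return value, source


l1, l2, l3 = {}, {}, {0x40: "b"}
ram = {0x40: "b", 0x80: "c"}
levels = [("L1", l1), ("L2", l2), ("L3", l3)]
print(lookup(0x40, levels, ram))  # ('b', 'L3'): found in L3 on the first try
print(lookup(0x40, levels, ram))  # ('b', 'L1'): now served by L1
print(lookup(0x80, levels, ram))  # ('c', 'RAM'): every cache level missed
```

The second call shows why the hierarchy pays off: once a value has been fetched, repeated accesses are served by the fastest level.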

Which One is Better to Have?

This question is best answered in three simple words: ‘All of them.’

Well, like most of the users you too may argue and cast your vote in favor of the L1 cache memory but a closer and deeper look into things will reveal some unknown and amazing facts.

It is true that a high speed cache is quite important for a CPU to perform well and so the Level 1 cache has a slight edge over the other cache levels being the fastest of them all.

Moreover, the fact that the CPU accesses the L1 cache memory first may make you feel that the other two levels of cache memory are not required and are a sheer waste of space and power.

The Level 1 cache is divided into two parts: a data cache and an instruction cache.

The data cache stores the data on which the CPU has to perform an operation and the instruction cache holds the instructions that describe these operations.

On some designs the instruction cache holds branching information as well as pre-decode data. The core only reads from the instruction cache, whereas it both reads from and writes to the data cache.

This ensures that all the necessary instructions and information are available close to the fetch unit when loops are executed.

The L2 and L3 caches are not split like the L1 cache memory but both are larger in size.

However, there is no denying that without the support provided by the Level 2 and Level 3 cache memories, the Level 1 cache will simply not be able to perform as well as it is expected to in the real world.

There are several reasons to say so, but the most significant one is the algorithms and design choices that are used to build cache systems.

As you may know, the high speed memory used for caches is quite expensive, and using a lot of it invariably increases the cost of the hardware, potentially by 50 to 200 dollars.

Users typically shy away from spending this additional amount.

Therefore, the manufacturers try really hard to select specific algorithms that will offer the highest bang for the buck, so to speak.

They therefore keep the Level 1 cache memory small. The smaller the cache, the more often the data the CPU needs will not be in it.

This inevitably raises the demand for additional L2 and L3 cache memories.

In the real world, in terms of cache performance, there can be either cache hits or cache misses.

These are specific conditions in which the system cache may have or may not have the required data or instructions for the CPU.

Therefore, a cache hit is when the CPU accesses the cache and gets the required data to run its workload as well as the operating system at full speed.

On the other hand, a cache miss is a situation when the CPU does not get the required data instantly but has to sit idle in wait states while the memory system reloads that data into the cache memory or it may look for it in other places.

This unwanted delay is reduced, if not eliminated completely, by dividing the memory system into different levels and managing each of them as an individual entity.

It is all about state machines, digital logic and cache algorithms.

The cache system, in such situations, has only one particular job to do, which is to ensure that a copy of the memory locations the CPU is likely to need is available in these small high speed cache memories.

This job is never finished, since what needs to be cached keeps changing while the programs keep on running.
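To make hits, misses and the changing cache contents concrete, here is a minimal sketch of a tiny cache that discards its least recently used entry when it is full. The article does not name a replacement policy, so least-recently-used (LRU) is an assumption here, real CPUs implement cheaper hardware approximations of it, and the class name `LRUCache` is hypothetical.

```python
from collections import OrderedDict


class LRUCache:
    """A tiny software model of a cache with least-recently-used eviction."""

    def __init__(self, capacity):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        self.lines = OrderedDict()  # oldest entry first, newest last
        self.hits = 0
        self.misses = 0

    def access(self, address, backing_store):
        if address in self.lines:
            # Cache hit: mark the entry as most recently used.
            self.hits += 1
            self.lines.move_to_end(address)
            return self.lines[address]
        # Cache miss: fetch from the slower backing store and keep a copy.
        self.misses += 1
        value = backing_store[address]
        self.lines[address] = value
        if len(self.lines) > self.capacity:
            self.lines.popitem(last=False)  # evict the least recently used entry
        return value


memory = {address: address * 10 for address in range(4)}
cache = LRUCache(capacity=2)
for address in [0, 1, 0, 2, 1]:
    cache.access(address, memory)
print(cache.hits, cache.misses)  # 1 4
```

With room for only two entries, the access to address 2 pushes out address 1, so the final access to address 1 misses again. A larger cache, or a second level behind this one, would have caught it.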

Therefore, it is better to have all of them because if you have only one type of cache memory it has to be larger in size to store all the relevant and required data for the CPU to access.

Feasible as it may sound, it involves a significant trade-off – the larger the size of the cache, the higher the latency will be.

This means that if the cache is larger, it will take much longer to search and retrieve the necessary data from it.

You will know the reason for it if you think about the larger network of transistors that needs to be branched out so that more memory cells are covered.

That is why the manufacturers use multiple levels of cache memory, with the first one being small and the fastest, taking only a few CPU cycles, and the other levels getting progressively larger and slower.

The CPU works through these levels in turn, and only when all of them miss does it finally have to reach the main memory.

Retrieving data from the main memory takes hundreds of cycles and therefore reduces the overall performance of the system.

In short, the manufacturers rely on probability: most requests are likely to be served by the small, fast levels, which softens the trade-off between latency and cache size.

This makes all the three levels of cache memories so important to have in a processor.
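The probability argument can be put into numbers with a rough expected-latency calculation. The sketch below is a simplified model under stated assumptions: each level adds its own lookup cost for every request that reaches it, and the cycle counts, the hit rates and the function name `average_access_cycles` are all illustrative rather than measurements of any real CPU.

```python
def average_access_cycles(levels, memory_cycles):
    """Expected cycles per memory access for a multi-level cache hierarchy.

    `levels` is a list of (lookup_cycles, hit_rate) pairs, fastest level first.
    """
    expected = 0.0
    reach_probability = 1.0  # chance that a request gets as far as this level
    for lookup_cycles, hit_rate in levels:
        # Every request that reaches this level pays its lookup cost.
        expected += reach_probability * lookup_cycles
        # Only the misses continue to the next level.
        reach_probability *= 1.0 - hit_rate
    # Requests that missed every cache level go to main memory.
    return expected + reach_probability * memory_cycles


# Assumed figures: (cycles, hit rate) per level and 200 cycles for main memory.
three_levels = [(4, 0.9), (15, 0.8), (40, 0.7)]
only_l1 = [(4, 0.9)]
print(f"{average_access_cycles(three_levels, 200):.1f}")  # 7.5
print(f"{average_access_cycles(only_l1, 200):.1f}")       # 24.0
```

Under these assumed numbers, adding L2 and L3 behind the same L1 cuts the average cost of an access to roughly a third, because far fewer requests travel all the way to main memory.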

However, all these are generalities. The placement of the caches typically depends on the details and specs of the processor as well as its interconnect architecture.

With the increase in the density of the cores on a chip, you may even see more cache levels in a CPU in the future.

Solutions other than additional cache levels may also be used, such as Non-Uniform Memory Access (NUMA) nodes.

Conclusion

Therefore, as it is pointed out by this article, the L1 cache memory is the fastest in comparison to the L2 and L3 cache memory.

However, all are faster than the main memory of the system which makes all of them useful and effective to meet all of your computing needs.