What is NVMe (Non-Volatile Memory Express)?

NVMe (Non-Volatile Memory Express) is an open logical device interface specification for accessing non-volatile storage media. It is a storage access and transport protocol designed for next-generation SSDs.

It is an alternative to the ATA and SCSI standards that offers faster access, higher IOPS and lower latency by using the PCI Express bus.

KEY TAKEAWAYS

- The NVMe storage protocol connects the memory subsystems to the host and offers high performance, NUMA optimization and scalability.

- The specific features that make NVMe so useful are SSD parallelism, an updated bus, deep multi-queue and RDMA support, a controller memory buffer and multi-host provisions.

- This specific protocol streamlines the command sets to enhance the performance of the SSDs.

- NVMe also adds power management, command enhancements and security features.

Understanding NVMe (Non-Volatile Memory Express)

NVMe or Non-Volatile Memory Express is a storage protocol. Its primary objective is to connect the memory subsystem to the host.

However, it is not just that. It is a protocol that:

- Ensures high performance,

- Is also Non-Uniform Memory Access or NUMA optimized, and

- Is highly scalable.

This is a comparatively new standard that comes with a lot of new features.

Most importantly, it is designed to connect NAND SSDs and other persistent NVM media directly to the CPU over a PCIe interface.

The architecture and functionality of the protocol are heavily dependent on high-speed PCI Express lanes.

A PCIe Gen 3.0 x4 link offers about 3.9 GB/s of usable bandwidth, well over double the 600 MB/s of the standard SATA interface.
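The gap between a PCIe Gen 3.0 link and SATA can be worked out from the published line rates. The sketch below is only a back-of-the-envelope calculation: it accounts for line encoding overhead (128b/130b for PCIe 3.0, 8b/10b for SATA III) but ignores packet and protocol overhead, so real drives deliver somewhat less. The function and constant names are illustrative and do not come from any library.

```python
def usable_mb_per_s(line_rate_gbit: float, payload_bits: int, total_bits: int) -> float:
    """Usable bandwidth in MB/s (decimal) after line-encoding overhead."""
    usable_bits_per_s = line_rate_gbit * 1e9 * payload_bits / total_bits
    return usable_bits_per_s / 8 / 1e6  # bits -> bytes -> megabytes

# PCIe 3.0: 8 GT/s per lane with 128b/130b encoding.
PCIE3_LANE_MB_S = usable_mb_per_s(8.0, 128, 130)
PCIE3_X4_MB_S = 4 * PCIE3_LANE_MB_S  # typical NVMe SSD link width

# SATA III: 6 Gbit/s with 8b/10b encoding.
SATA3_MB_S = usable_mb_per_s(6.0, 8, 10)

print(f"PCIe 3.0 x1: {PCIE3_LANE_MB_S:.0f} MB/s")
print(f"PCIe 3.0 x4: {PCIE3_X4_MB_S:.0f} MB/s")
print(f"SATA III:    {SATA3_MB_S:.0f} MB/s")
print(f"x4 vs SATA:  {PCIE3_X4_MB_S / SATA3_MB_S:.1f}x")
```

Under these assumptions a single Gen 3.0 lane already exceeds SATA III, and the four-lane link used by most NVMe SSDs is several times faster.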

NVMe speeds and throughput vary from drive to drive and fall into different classes, depending on the attributes and design of the device. For example:

- NVMe maps I/O operations over PCIe through shared host memory. It supports up to 64K I/O queues, each holding up to 64K commands, which optimizes input-output and simplifies the software stack.

- These features let NVMe outpace the other formats, including SATA SSDs, legacy SAS and SAS or SATA HDDs.

- The top rated and fastest NVMe drives are typically available to larger enterprises or OEMs. These can read at 3 GB/s and write at 1 GB/s. They can also deliver random read IOPS of over 300,000 and random write IOPS in the range of 40,000 to 50,000.

- Drives aimed at mid-sized data centers, which do not need to work at this lightning speed, can still attain sequential write speeds of 1900 MB/s, random writes of 50k IOPS, and random reads of 540k IOPS.

It is the architecture of NVMe that determines the speed.

It also keeps latency consistently under 20 microseconds and, for some drives, under half of that.
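The IOPS and latency figures quoted above are linked. As a rough estimate, Little's law from queueing theory says the average number of commands in flight equals the arrival rate multiplied by the time each command spends in the system. The sketch below applies it to the figures from this section. The 4 KiB block size is an assumption (the figures above do not state one), and the function names are made up for illustration.

```python
def outstanding_commands(iops: float, latency_s: float) -> float:
    """Little's law: average commands in flight = rate x latency."""
    return iops * latency_s

def throughput_mb_s(iops: float, block_bytes: int) -> float:
    """Data rate in MB/s (decimal) implied by an IOPS figure."""
    return iops * block_bytes / 1e6

LATENCY_S = 20e-6    # 20 microseconds, as quoted above
BLOCK_BYTES = 4096   # assumed 4 KiB random I/O size

for iops in (300_000, 540_000):
    in_flight = outstanding_commands(iops, LATENCY_S)
    rate = throughput_mb_s(iops, BLOCK_BYTES)
    print(f"{iops:>7} IOPS: ~{in_flight:.1f} commands in flight, ~{rate:.0f} MB/s")
```

The takeaway is that a drive can only sustain hundreds of thousands of IOPS at these latencies if several commands are outstanding at once, which is why NVMe's deep, parallel queues matter.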

All these numbers are very impressive by the standards of the legacy protocols, and they are available across the NVMe form factors.

NVMe drives ship as standard-size PCI Express expansion cards or in a 2.5” form factor that uses the U.2 connector and a four-lane PCIe interface.

The U.2 connector is a popular choice for several good reasons:

- It is easy to deploy

- It can connect the SSDs to one distinct host and

- It can work with PCIe, SATA, and SAS.

The U.2 connector carries multiple lanes that enhance its performance. For example, there are:

- 4 PCIe lanes

- 2 SAS lanes and

- 1 SATA lane.

All these lanes provide better and broader interface support, especially in the 2.5” form factor.

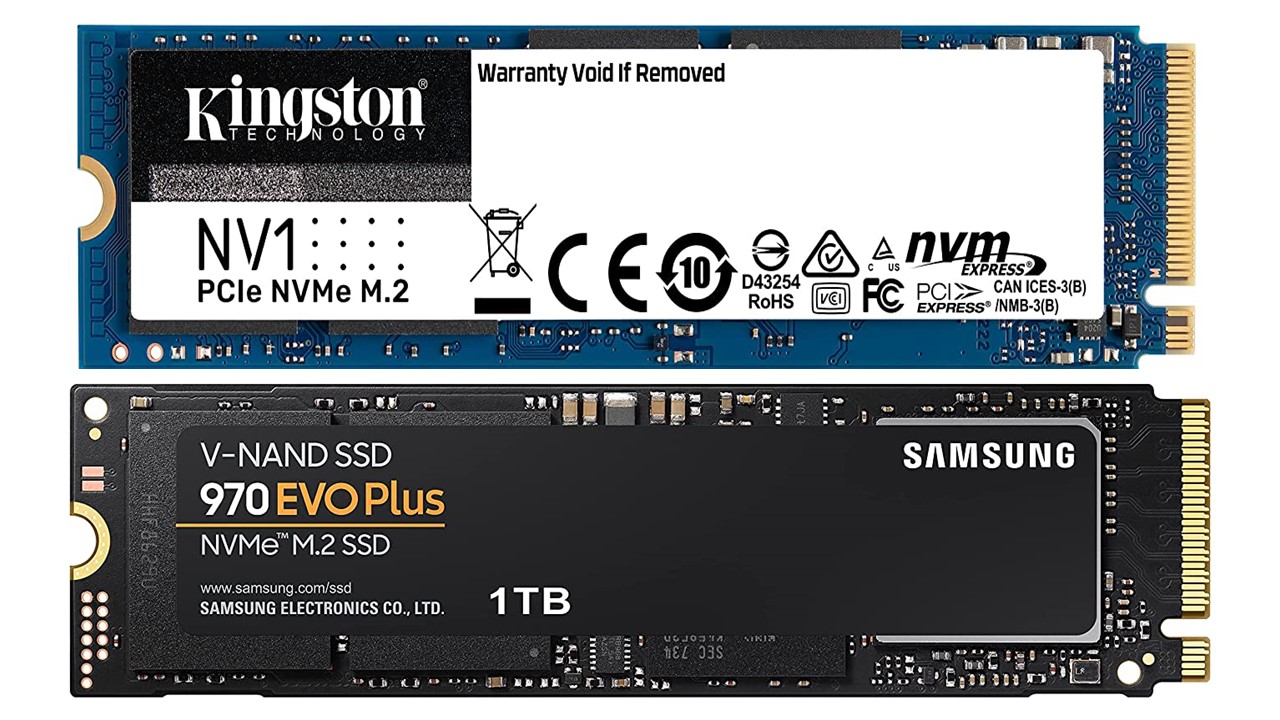

NVMe usage at the consumer level is also growing. Here the compact M.2 specification is used, which supports different interfaces such as:

- PCIe

- SATA and

- USB.

The good thing about M.2 boards is that they are available in different sizes, so choosing the smallest board gives a smaller PCIe footprint.

Depending on the board selected, the storage capacity of NVMe drives can range from about 450 GB at the consumer level to 11 TB or more in data centers.

The specific features that enhance the speed of NVMe are:

- SSD parallelism in the architecture helps reduce I/O overhead. HDDs and tape are very sensitive to the access pattern and perform far better with sequential than with random transfers. SSDs, in contrast, operate in parallel, so the access pattern has little or no effect on their performance, whether the transfer is sequential or random.

- The updated bus lets hybrid flash arrays and SSD tiers take full advantage of the available speed, and they become more efficient once NVMe is deployed. This is a less expensive method than adding large amounts of RAM and multicore processors.

- NVMe supports up to 64K queues, each of which can hold up to 64K commands, and it can process these long queues simultaneously. This means that busy servers see lower latency while processing several requests at the same time.

- NVMe uses direct memory access over the PCIe bus to work with shared host memory and to map I/O commands, and it supports RDMA (Remote Direct Memory Access) when extended across a network fabric. The streamlined command set frees up a lot of CPU resources: NVMe is commonly cited as needing less than half the CPU instructions per I/O that SAS or SATA need.

- The controller memory buffer in NVMe allows the host to prepare commands directly in controller memory, so the controller does not have to fetch them across PCIe. NVMe also works with memory blocks rather than SCSI commands, which helps reduce latency. It can also prioritize commands based on the parameters set out in the service level agreement.

- Finally, NVMe provides multi-host reservations, which are used by Windows clusters. Reservations on shared namespaces help coordinate access by multiple hosts.
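The multi-queue design in the list above can be pictured with a toy model. In NVMe, the host places commands in a submission queue and the controller posts results to a paired completion queue, and drivers commonly give each CPU core its own pair so that cores do not contend for a shared lock. The sketch below is a deliberately simplified simulation of that idea. It does not reproduce the real command format, doorbell registers or ring-buffer layout, and the class and method names are invented for illustration.

```python
from collections import deque
from dataclasses import dataclass, field

@dataclass
class QueuePair:
    """Toy stand-in for one submission/completion queue pair."""
    depth: int                                 # max commands the SQ can hold
    sq: deque = field(default_factory=deque)   # submission queue (host -> device)
    cq: deque = field(default_factory=deque)   # completion queue (device -> host)

    def submit(self, command_id: int, opcode: str, lba: int) -> bool:
        """Host side: enqueue a command; refuse if the queue is full."""
        if len(self.sq) >= self.depth:
            return False
        self.sq.append((command_id, opcode, lba))
        return True

    def controller_process(self) -> None:
        """Device side: drain the SQ and post one completion per command."""
        while self.sq:
            command_id, _opcode, _lba = self.sq.popleft()
            self.cq.append((command_id, "SUCCESS"))

    def reap(self) -> list:
        """Host side: collect and clear all posted completions."""
        done = list(self.cq)
        self.cq.clear()
        return done

# One queue pair per (hypothetical) CPU core, each submitting independently.
NUM_CORES = 4
pairs = [QueuePair(depth=8) for _ in range(NUM_CORES)]
for core, qp in enumerate(pairs):
    for i in range(3):
        qp.submit(command_id=core * 100 + i, opcode="READ", lba=i * 8)

for qp in pairs:
    qp.controller_process()

completions = [qp.reap() for qp in pairs]
for core, done in enumerate(completions):
    print(f"core {core}: {len(done)} completions")
```

Because every core owns its queues, no submission in this model waits on another core, which is the property that lets busy servers keep latency low under many simultaneous requests.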

NVMe also includes a few more advanced features that support security container commands, command enhancements, and power management. There is also a host memory buffer, which is aimed at client and mobile NVMe devices.

What Are the Benefits of NVMe?

NVMe storage has many benefits, and the most significant of all is that it saves a lot of time.

Time is essential for enterprise data centers because it directly affects productivity. Apart from that, the list of benefits includes:

- It leverages not only SSDs but also today's multicore processors and gigabytes of memory, unlike the traditional protocols designed for mechanical hard disk drives.

- It also streamlines the command set so that data can be parsed and manipulated most efficiently.

The newer NVMe over Fabrics, or NVMe-oF, provides more efficient and faster transfer of data between servers and storage systems.

NVMe-oF extends the latency and performance benefits of NVMe across the network.

This benefit applies irrespective of the type of network fabric, including:

- Ethernet

- InfiniBand and

- Fibre Channel.

NVMe provides higher IOPS all the way from the data fabric to the storage array.

This is a significant benefit to financial services, the life sciences industry and energy companies. This is how:

- The energy companies depend on low latency, high performance, and fast HPC. This helps them to make complex calculations much faster.

- Financial services firms benefit because NVMe helps them expedite large numbers of transactions. When NVMe is used as secondary storage, it reduces, if not eliminates, CPU wait times.

- In the life sciences industry, testing and compiling results can be up to six times faster than with SATA.

Therefore, in spite of the increase in cost, using NVMe storage will provide a favorable return on investment.

Questions & Answers:

What does NVMe stand for?

NVMe stands for Non-Volatile Memory Express. This is the latest standard for high-speed storage media, and it brings several significant advantages when accessing that media.

Because it outperforms the legacy protocols, NVMe is very important for data-driven businesses.

What is NVMe ready?

NVMe ready describes a system that can deal with tail latency successfully. It is also said to be ready in the sense that standard NVMe is poised to make the leap from local storage to NVMe-over-Fabrics or NVMe-oF solutions.

These solutions transport data across a network fabric such as Fibre Channel and keep the traffic performing within tolerable limits.

Are NVMe and M.2 the same thing?

Certainly not. NVMe is the software interface that allows SSDs to operate through PCI Express directly. It is an alternative to the traditional SATA interface.

M.2, on the other hand, is a form factor. It indicates the physical shape and size of the card plugged into the slot and helps in maximizing space and reducing footprint.
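One practical way to see the difference is to ask the operating system which protocol a drive speaks, regardless of its physical shape. On Linux, NVMe controllers appear under `/sys/class/nvme`, each with attribute files such as `model` and `transport`. The sketch below reads those files. It assumes a Linux system with the standard sysfs layout, the helper name `list_nvme_controllers` is made up for this example, and on other systems (or machines without NVMe drives) it simply returns an empty list.

```python
from pathlib import Path

def list_nvme_controllers(sysfs_root: str = "/sys/class/nvme") -> list:
    """Return one dict per NVMe controller found under sysfs_root."""
    root = Path(sysfs_root)
    if not root.is_dir():
        return []  # not Linux, or no NVMe driver loaded
    controllers = []
    for ctrl in sorted(root.iterdir()):
        info = {"name": ctrl.name}
        for attr in ("model", "transport"):
            attr_file = ctrl / attr
            # Each attribute is a small text file; missing ones become None.
            info[attr] = attr_file.read_text().strip() if attr_file.is_file() else None
        controllers.append(info)
    return controllers

for ctrl in list_nvme_controllers():
    print(ctrl)
```

An M.2 SATA drive would not show up here at all, because it is driven through the SATA stack even though it occupies the same kind of slot.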

Which is faster, NVMe or M.2?

The two are not directly comparable, because M.2 is a form factor and an M.2 drive can use either NVMe or SATA. An NVMe drive is much faster than a SATA drive in the M.2 form factor because it uses four PCIe lanes for data transfer. This allows it to reach speeds of up to 3500 MB/s for reading and up to 2500 MB/s for writing, which is about six to seven times the speed of a SATA SSD.

Conclusion

The bottom line is that NVMe or Non-Volatile Memory Express, which is also known as Non-Volatile Memory Host Controller Interface Specification or NVMHCIS, is a standard built on PCIe connectivity and extended, through NVMe-oF, to network fabrics such as Ethernet.

It is considered to be one of the most advanced standards.